Getting map directions is easily one of the best features and use-cases for Google Glass. Seeing turn-by-turn directions in the corner of your eye when you’re out and about is one of the simple pleasures of wearing a computer on your head.

Unfortunately, the only way Google provides to start navigation is speech recognition, which fails more often than it works. Even though Glass’ speech recognition works well enough for simple queries like “Pizza Hut” or “62 King St”, it stumbles on more complicated place names and addresses (especially with an Australian accent). Of course, there’s also the problem of sounding like a crazy person yelling addresses on the street.

Needless to say, this problem has been frustrating me for weeks, and because I had so much fun developing my first Google Glass app, I knew I could solve it too.

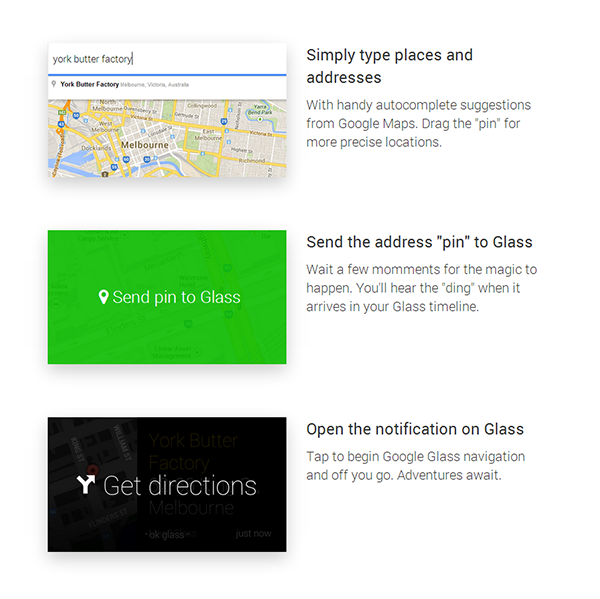

The solution had to be typing, but you can’t type on Glass. So the next best thing was to type in a browser or on your phone, then send the address to Glass, like ChromeToPhone. Thankfully, the Glass Mirror API allows you to send content with a geolocation latitude/longitude and a “NAVIGATE” action for this exact purpose.

So over the Valentine’s Day weekend, I decided what better way to spend a romantic evening than with the Mirror API, PHP, SQL Azure and the Google Maps API. After a few hours of trial and error, Map2Glass.com was born.

It’s a simple website that lets you log in with a Google Glass account and opens a map view with an autocomplete search box at the top. Google Maps’ v3 API makes this almost too easy. A “Send to Glass” button then takes the latitude and longitude of a pinned address (along with some other metadata), formats it as a Glass Timeline card and sends it to the Mirror API. Once received on Glass, a simple tap begins navigation to the embedded location.
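To make the “Send to Glass” step a little more concrete, here is a rough sketch of what such a Timeline card could look like as a Mirror API JSON body. The site itself was written in PHP, so this Python version is purely illustrative and is not the actual Map2Glass code. The function name `build_navigation_card` and the sample place are made up for the example, and the field names (`text`, `location`, `menuItems`, `notification`) follow my recollection of the Mirror API timeline item format, so treat them as assumptions to check against the API reference.

```python
import json


def build_navigation_card(name, address, latitude, longitude):
    """Build a Mirror API style timeline item for a pinned map location.

    Hypothetical helper for illustration only. The field names are assumed
    from the Mirror API timeline item format, not taken from Map2Glass.
    """
    # Reject coordinates that cannot be a real point on the map.
    if not -90.0 <= latitude <= 90.0:
        raise ValueError("latitude must be between -90 and 90")
    if not -180.0 <= longitude <= 180.0:
        raise ValueError("longitude must be between -180 and 180")

    return {
        # Text shown on the card in the Glass timeline.
        "text": name,
        # The embedded location that navigation will route to.
        "location": {
            "latitude": latitude,
            "longitude": longitude,
            "displayName": name,
            "address": address,
        },
        # NAVIGATE adds the "get directions" option when the card is tapped.
        "menuItems": [{"action": "NAVIGATE"}, {"action": "DELETE"}],
        # Ask Glass to chime when the card arrives.
        "notification": {"level": "DEFAULT"},
    }


if __name__ == "__main__":
    card = build_navigation_card(
        "Sydney Opera House",
        "Bennelong Point, Sydney NSW 2000",
        -33.8568,
        151.2153,
    )
    # This JSON would be the body of the request sent to the Mirror API.
    print(json.dumps(card, indent=2))
```

In practice, the resulting JSON would be POSTed to the Mirror API’s timeline endpoint with the logged-in user’s OAuth 2.0 access token. That part is left out here because it depends on how the login flow is set up.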

I threw the code on Windows Azure Web Sites, bought a domain and started spreading it around. On a post in the Google+ community of Glass Explorer users I got a comment which was very fitting for Valentine’s Day and it made it all worthwhile.

What this “phone-to-Glass” workflow has taught me is that even though I strongly believe wearable computing is the future, simple and precise tasks like typing can be perfectly complementary to the wearable experience.

What’s the exact reason you put a red circle around the “I love you” comment?

I’m lonely 🙁

I want to +1 this comment lol

but yeaaah – thank you so freaking much!

The map2glass.com page is not working now(((( can you bring it back alive?

we miss this app badly on Google Glass