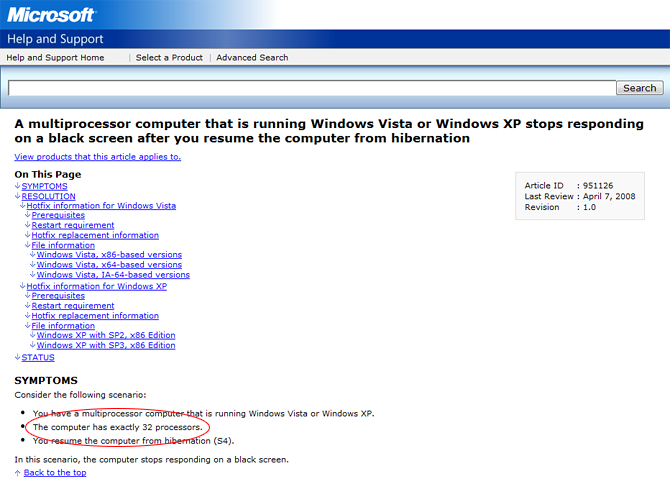

It’s not uncommon to come across a patch for a software problem you’ve never heard of or never thought was possible. In most cases they’re caused by an unconventional use case or a bad combination of hardware and software configurations. Sometimes the symptoms are so exceptional that you wonder who in the world would be affected in the first place. I wouldn’t be surprised if in this case it was just one person. The Microsoft KB article speaks for itself.

I have to ask: who discovered the problem, what was the fault, who uses a consumer operating system on a supercomputer, and what would happen if you had 31 or 33 processors?

ROFL, I’d like to see how many PCs have 32 processors.

Maybe really fast servers.

M$ does it again… now that’s hilarious!!

i have no clue how you find these things….:)

peas

cityboy

Wow lol …

exactly who has 32 processors??? except intel???

i’m sorry but….. hahahaha

that was so funnyyyyyyy !!!!!!

Here’s what I want to know, and it’s to do with their file information.

1) They’ve listed “Windows Vista, IA-64-based versions”. As far as I was aware, there never was and never will be an Itanium version of Vista. Wtf?

2) Vista Enterprise and Vista Ultimate both support only up to TWO CPUs. How can a 32 processor bug affect Vista?

@Andrew, the two-CPU limit (physical CPUs, no limitation on the number of cores) is a licensing restriction, not a technical limitation.

Man this has been said so straightforwardly.

Congratulations on this invention, Long, I envy you.

@Soum

Thanks. Not actually having two processors, all I can go with is what it says on the paper until I actually try it with more than two processors. Thanks for clearing it up. Still leaves the Vista-Itanium edition unsolved though 🙂

that black screen post hibernation resume thing has happened to me on an XP machine before… and it didn’t have 32 processors.

heck, that machine had a single-core CPU!

Probably that’s what went wrong at Intel’s IDF with their Nehalem boxes – I think they were 32-logical-processor boxes. 2 CPU packages, 8 cores per package, 2 logical threads per core.

As for Vista on Itanium, Windows Server 2008 supports Itanium and Vista SP1 and Server 2008 share the same kernel.

AAAAAAAAAAAAAAAHAHAHA

Microsoft is gaining a reputation for its KB articles. http://support.microsoft.com/kb/276304

Ha! This solves all my problems. Thank you, Long! 😉

=============================================

exactly who has 32 processors??? except intel???

=============================================

Well, now that we are at it. I am one of the guys who got more than 32 processors.

But they are not all on the same mobo.

I’m sure they mean 32-Bit processor or something, otherwise that’s just plain ridiculous lol

The question is: WHO is criminal enough to put Windows on THAT kind of computer?!

(you’ll read this in two or three years and laugh when Windows Seven requirements will be “at least 16 CPUs”)

Windows with 32 processors is useful for running those heavy VB programs we all used to write/use.

@ Tom…..lol thats even funnier!

peas

cityboy

The bigger question is who hibernates such an expensive rig.

Trying to get everyone’s attention again by adding the KB article’s title:

Error Message: Your Password Must Be at Least 18770 Characters and Cannot Repeat Any of Your Previous 30689 Passwords

http://support.microsoft.com/kb/276304

Funny stuff – and a pretty amazing find.

maybe it was a typo and it should be 2-processor

This is April Fools’ Day Joke – look at the timestamp of Halacpi.dll 😉

Cheers, Roman

@Himanshu Jain:

a better question would be why would you ever need to power that machine down?

i’m sure anything with that much memory would have at least 4 cores behind it, and do nothing but run VMs.

@doknir:

the timestamps for all the files are April 1st!

> the timestamps for all the files are April 1st!

Indeed.

BTW, patch for Windows XP with SP3 is also available: It’s build 5573 (5.1.2600.5573).

Will this patch work on my 64 processor computer? 😉

I wonder how FSX would run on your 32 processor computer?

“i’m sure anything with that much memory would have at least 4 cores behind it, and do nothing but run VMs”

No mention of memory, and i’m pretty sure it has exactly 32 cores behind whatever memory there is 😉

@Orion:

forget that! gimme Crysis on that baby! 32 processors and a wicked graphics card array…. i think i’ve just found the machine outside Crytek that can run Crysis!

unless that IS the only machine that can run Crysis… 😉

Hi all.

A 32-processor system on one motherboard actually exists.

I have a picture of it in one of my university books (a couple of years old, which I’m having a hard time finding right now).

The picture shows (if my memory’s correct) up to 32 processors on a single board.

pretty interesting don’t you think 😛

Patch applied. Now my notebook is safer and more stable 🙂

@Freddy: Must be a big notebook! A 42 incher?

How could anyone afford a server with this type of hardware and let it go into hibernation (bearing in mind they go haywire with over 3 GB of memory; I thought hibernation was disabled there. Oh, was it patched last month to fix that, or wasn’t it pointed out?)… Not that I can spell or even be bothered to correct it, but I guess if you’ve got that much power and can let the machines sleep and reawaken, you’ve got too much money…

I think that was only 32-bit OSes (assuming you would need more than 4 GB for that many processors), but you’re still better off letting the ones with 16 processors (or fewer) sleep (the title says processors, not cores). But nice to know there is someone with this problem… Shame for all those people who still can’t get various things to work under Vista… (not always MS’s fault)

i think they just forgot a “/” and meant 3/2 processors.

HPC Systems and Microway both make 32-core (8 socket, quad core) systems:

http://hpcsystems.com/workstationaw800.htm

http://www.microway.com/8wayopteron.html

No idea why a person would want to run XP or Vista on one though.

“31 or 33 processors”? You dare to even ponder running a number of processors that’s not a power of two? Begone, sinner!

crap… i’m out of luck, i’m having that same problem on my computer that has 64 processors… -=p

I think that the KB may really refer to 32-processor workstations that someone would expect to run Windows XP (or possibly Vista, I suppose) on.

Opterons support 8 sockets on a motherboard, and now that they have quad core Opterons, that means you can stick 32 cores onto a single motherboard. At a minimum, Tyan and SuperMicro both make 8 socket motherboards. I suspect these are mostly found in large servers.

There is, however, a demand in certain fields for workstations like this. For instance scientific visualization or film visual effects would both justify machines like this. Basically anyone who used to buy SGI Onyx workstations would possibly be a candidate for this sort of hardware.

A company called BOXX make such an Opteron system specifically for desktop use. The machine, called the Apexx 8, will support 32 processors, 256 Gigs of RAM, and currently 15 Terabytes of storage, in a desk side chassis. While certainly a lot of work is done on regular PCs (or Macs) and render farms built from server machines, there are times when it is worth the money to have one big bad hero box that can beat shots into submission against a tight deadline.

It’s interesting for sure since unless someone has invented 16-core CPUs there’s no way for Vista or XP to actually use 32 CPU cores, since more than 2 physical CPUs won’t be used.

You ask about 31 or 33 CPUs; 33 would be impossible since the technical limit for CPUs on 32 bit Windows is 32. This is because thread CPU affinity is handled by a bitmask which is the size of a CPU word. In fact, I suspect that this bug has something to do with this being the maximum possible number of CPUs.

Which in turn makes me wonder whether this problem exists on the 64 bit versions of Windows if you have 64 CPUs (which is the technical limit for 64 bit Windows).
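To make the bitmask argument above concrete, here is a minimal Python sketch. It assumes one bit per logical processor in a mask as wide as the machine word, as the comment describes; the function names `affinity_mask` and `logical_processors` are made up for illustration and are not part of any Windows API.

```python
def affinity_mask(cpus, word_bits=32):
    """Build an affinity bitmask with one bit per logical processor.

    word_bits is the assumed width of the mask (32 on 32-bit Windows,
    64 on 64-bit Windows, per the comment above). A CPU index that does
    not fit in the word cannot be represented, so it is rejected.
    """
    mask = 0
    for cpu in cpus:
        if not 0 <= cpu < word_bits:
            raise ValueError(f"CPU {cpu} does not fit in a {word_bits}-bit mask")
        mask |= 1 << cpu
    return mask


def logical_processors(packages, cores_per_package, threads_per_core=1):
    """Count logical processors as packages x cores x threads."""
    return packages * cores_per_package * threads_per_core


if __name__ == "__main__":
    # All 32 CPUs (indices 0..31) exactly fill a 32-bit word.
    print(hex(affinity_mask(range(32))))

    # A 33rd CPU (index 32) has no bit left in a 32-bit mask.
    try:
        affinity_mask([32])
    except ValueError as err:
        print(err)

    # Configurations mentioned elsewhere in this thread that reach 32:
    print(logical_processors(2, 8, 2))  # 2 packages, 8 cores, 2 threads each
    print(logical_processors(8, 4))     # 8 sockets, quad core
```

Under these assumptions, 32 processors is exactly the point where every bit of a 32-bit mask is in use, which fits the suspicion that the bug sits at the boundary; with `word_bits=64` the same boundary would fall at 64 processors.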

http://istartedsomething.com/20080408/cant-resume-windows-32-processor/#comment-59195

“This is April Fools’ Day Joke – look at the timestamp of Halacpi.dll

Cheers, Roman”

and the timestamps on everything else…

if you have Vista running in a 32 processor machine, you have 32 times more chances to crash, that’s why vista is more likely to crash in such a machine. Even not existing 32 processor machines yet, M$ is ahead of its time and is preparing vista to crash when such machine exist.

Learn to speak English properly before making such ignorant, annoying, and biased statements, ‘MIKE’. Thank you.

This one is nice:

http://support.microsoft.com/default.aspx/kb/281923

=)

…more interestingly…this patch worked for me, joking aside.

32 way servers are actually not that uncommon, there’s plenty of datacenters around utilizing such huge computers,

although most of them run a unix variant.

nos: How many are running Windows XP or Vista on a computer with 32 processors? 😛

Ok, this is a weird bug, but there are lots of people out here that have fairly massive setups like this. Outside of the college kids and 733t teens modding mommy’s desktop, things like this exist, and are more common than apparently people realize.

I’m also surprised by the responses questioning why anyone would install XP or Vista on such a machine. Does everyone forget Windows is NT based? NT is still one of the most highly regarded kernel technologies in history. And when it comes to scaling and multi-processor usage, XP was good and Vista soars on ‘high number’ CPU configurations.

You will find kernel technologies like a straight MACH or a monolithic BSD interface don’t do well in large CPU configurations. Additionally, Linux is a heavy microkernel and does better with SMP scaling, but can’t quite achieve the results of a hybrid kernel technology like NT.

People who use systems like this commonly will install a high performance OS kernel like Vista or XP or Windows Server, and then install the UNIX subsystem to work with non-Windows design and engineering. The stability and performance of the NT core is the key ingredient here. And the MS UNIX subsystem running concurrently with WIN32/WIN64 is a nice complement to people doing *nix work in addition to easy development and testing projects in the Win32/64 subsystems.

People really need to get off the mindset that Windows = Crap kernel. This was true of the DOS/Win9X kernels of the 90s, but the NT kernel was designed by the best UNIX designers and theorists of the time, that could have made NT UNIX based (MS owned XENIX in case they wanted to make it UNIX). Instead the designers took the best theories and designs to make NT and let go of UNIX because of the inherent flaws in the UNIX model.

For example:

The ACL security in the NT kernel is object/token based.

NT uses an object-oriented I/O model, instead of the UNIX style I/O

NT doesn’t have limits when it comes to the I/O-agnostic concepts of UNIX

NT is also a client/server kernel technology as the NT OS runs underneath the Win32/Win64/Posix/UNIX subsystems, and each subsystem acts like an independent OS with intercommunication abilities through the NT kernel. This is how and why MS’s vision of Hypervisor and virtualization technology rocks, because they have been doing this for years, and by using the NT client/server model with the hypervisor type of design, NT has so many OS and virtualization options it becomes the perfect core OS to manage all client OSes.

NT is far more extensible because of the client/server kernel nature with light API layers.

Just like NT 4.0 and again in Vista, MS ripped out and redesigned the entire video driver system, and in Vista left a hybrid kernel/user mode driver model that didn’t require any rewriting of NT itself to attach the new system to the NT kernel/architecture. In contrast doing this in OpenBSD or Linux would have required major rewrites in the kernel, where this is agnostic to NT and both models plug in at the same time transparently. This means Vista can use the XPDM or the WDDM without blinking an eye or NT having to deal with the changes as this is handled in offset APIs at a higher level, being the nature of the Hybrid Kernel.

Anyway, I know it is popular to slap Windows around, but most people do this based on concepts or beliefs of the Win9X era of Windows, not the WinNT/2K/XP/Vista era of OSes, that do have some solid underpinnings that still outshine most other OS kernel architectures.

@TheNetAvenger – Great post. Do you have a blog or something? Would love to read more of your stuff.

On a side note, 32 processors and using Hibernate? Servers (generally the computers that would have 32 procs) generally don’t turn off if they can avoid it…

The only reason why one with such a machine wouldn’t use Vista or XP, is because they know linux based systems are so far superior. Why buy a goldmine and outfit it with Beetle juice? Usually, the only people who would are the ones who work for MS. I swear they get massive kick backs just to use MS and advertise the fact as IT pros. to non-ITs. I knew a guy who was bending over backwards to support the extremely buggy Vista. We found out he worked for them.

@TheNetAvenger: very informative, but sticking with what I said earlier and what Yert said, is there any reason you would put a server with this much power into hibernation? I would assume you would turn off more servers with less processing power (and more electrical power) than one that can do more work for less, if that makes sense, especially in a data center environment? But still very informative, thank you.

@tpgames

If I had a BOXX Apexx 8, I would probably run Linux on it.

However, there is plenty of software that one might like to use that can’t run on Linux. Adobe’s After Effects comes to mind as a program that might work well on such a Windows machine, since it is already known to scale well to 8 CPUs.

so this is what geeks find funny.

Pardon me if I am wrong, but using more than two physical processors (not cores or virtual cores) is against the EULA for both operating systems.

@gerald

Vendors who make 16 and 32 processor workstations supply a special windows license with the system, and a special install CD that contains a HAL that will work with more sockets.

@TheNetAvenger

While this is a few days past your post, it is the largest load of unadulterated BS I’ve seen in quite a while. Hell, Windows gets beaten on workstation graphics performance of all things, and that is certainly not what UNIX excels at. Almost any SPEC result is the same — Windows is almost never the top in performance.

Firstly, BSD is not monolithic, and FreeBSD 7 outperforms Linux on up to 8-way SMP loads. Linux is a hybrid kernel (like NT). Mach is a microkernel (OS X sucks on a lot of server loads due to the amount of message passing required).

Secondly, while Windows is many things, “high performance” is not one of them when compared to operating systems optimized for server loads (SQL server aside, which performs well, but is common due to the ease of integration with Windows programs rather than performance characteristics compared to DB2/Oracle/Postgre).

NOBODY uses Vista in a server room. Nor XP, for that matter. Win2k3 is where we’re staying until 2008 gets at least one service pack. That being said, the claim that the NT kernel somehow handles multithreading and multiple processors better than UNIX or Linux is, frankly, laughable. SGI and Cray were running 32/64 processor boxes a decade ago. Take a look at the Top500 (http://www.top500.org/stats/list/30/os). Six of the systems are Windows. Six. Two are OS X, which is famously slow for things like that. AIX (IBM’s UNIX, probably on POWER systems, maybe mainframe) has four times as many. Two are Solaris, and you can expect that number to rise now that Sun can cram 128 processors into a 2U box. One is frakking Tru64, and the last major release of that operating system was years ago (IRIX boxes were sitting up there until pretty recently, too, and IRIX has been dead for a while). HP-UX intermittently shows up on the list. All in all, some 450 of the Top500 run… Linux.

As a UNIX sysadmin, your assertion that people install Windows on a large box then install some half-baked POSIX layer and 5 year old non-compliant shell (without Perl or any of the other tools which come -standard- on any UNIX) with the full load of a GUI rather than console-based UNIX/Linux is asinine. Clearly, you’ve never seen a datacenter (or at least one at a non-certified Microsoft shop). In the real world, many industries (retail, banking, grocery, scientific) run purely on UNIX for reliability. Banks run HP-UX heavily, grocery is AIX, telco is Solaris. The systems which -absolutely must not break- run UNIX, if you can tell. Hospital gear is QNX and IRIX for the most part on the backend.

XENIX died before NT was a concept, thanks. NT was designed because Microsoft needed a kernel that could support protected memory, multiple processors, etc, and they DID NOT WANT TO PAY AT&T FOR UNIX. It took almost all the concepts from VMS. There weren’t any “flaws” in UNIX they wanted to avoid that I’m aware of.

I will say that NTFS ACLs are much more granular than the general UNIX permissions system. However, all the major UNIXes (Solaris, HP-UX, AIX, Linux, OS X) support far more advanced user roles and monitoring (take a look at SELinux, Trusted HP-UX, or RBAC in Solaris for a comparison) than Windows, and filesystem permissions are hardly the defining role in security (which is really where NT has UNIX beat).

Object-oriented I/O? What does that even mean? As far as it goes, NT does not say “everything is an object.” I can’t cast a file. UNIX says “everything is a file, including devices”, and I can use any tool I want to. It’s far more “object-oriented” than NT with regards to I/O.

UNIX has no I/O limits. Who are the big NASes? NetApp (it’s UNIX on their NASes), EMC (UNIX), Sun (Solaris is clearly UNIX, and ZFS really is the most advanced filesystem out there), etc. Performance pounds Windows NASes and SANs by any benchmark you check on StorageReview.

Message passing through rings is not unique to NT. It’s in every OS since Win9x/DOS (and many before it, such as z/OS, MPE, Nonstop, UNIX, OS/2, VMS, et al). Ring 0 is not a hypervisor.

The perfect “Core OS” as a hypervisor is, by all respects, Linux (VMWare ESX is the virtualization system in the datacenter, and it’s based on RHEL). Even better is something like Logical Domains (Sun), LPARs (IBM), nPARs (HP-UX) which can partition hardware without a clunky hypervisor.

If NT is far more extensible, where are the extensions? How much embedded Windows do you see vs. embedded Linux, QNX, BSD, VxWorks, etc? Virtually none. In the real world, people do not use NT for those tasks. Beyond which, I don’t think you’ve done any development, Windows or otherwise. COM (the big Windows API) is anything but “light.”

Also, Linux/UNIX/BSD do not have a separate video driver model. A driver is a driver, and it gets DMA. X11/Xorg handles graphics and it is unbelievably versatile (as well as extremely modular). To put it this way: X11/Xorg from v6 to v7 was almost a total rewrite. A driver is a driver, and the same “nvidia” driver can drive Xsun (Sun’s proprietary X server), X11 on HP-UX, Xorg 6 on FreeBSD, and Xorg 7 on Linux. It does not take major kernel rewrites. They publish a specification. Driver developers code to the specification. It works, and the specification is stable. Even if the kernel implementation is completely rewritten, the kernel API stays the same. As neat as you may think it is that Windows has two different driver models, driver developers don’t like it as much.

In short, you are utterly wrong. Sure, cleanroom implementations of the NT kernel are flat-out amazing (the 40MB Windows 7 preview for instance). However, even in that case, it is not the ideal solution for some of the cases you’ve named (virtualization, for one). Until I can get a text-mode only version of Windows with a GOOD shell (PowerShell is good for scripting, but if I want an OO scripting language I’m headed for Python or Ruby rather than learning a new one which isn’t at all portable) with modern shell features (in short — port bash, ksh, or zsh rather than throwing away 30 years of perfecting features), it’s relegated to a few things — AD, Exchange, SQL Server, and things which need to integrate with those three.

My 32 processor servers are staying on UNIX or using hardware virtualization, thank you. Come back when you have some professional experience with high-specification servers or kernel internals.

I still have high hopes for Windows 7, and 2008 has some great new features (again, mostly for Domain controllers and Citrix stuff), but it’s not going to displace UNIX/Linux, and arguments for its technical superiority are on shaky ground.

@Ryan: NetAvenger is certainly biased towards Windows. Similarly, you’re biased towards UNIX. NetAvenger brings up graphics workstations as an example of NT’s superiority (a niche example if I ever saw one). You bring up AIX and Solaris in banks, which is more a matter of history than technology (UNIX shops don’t tend to switch to Windows any more than the reverse).

Building on VMS concepts is basically the same thing as avoiding UNIX concepts. If you want examples, go ask Dave Cutler for an interview, don’t wait for partisans to jump in with their favorite operating system feature. I refer you to http://www.codinghorror.com/blog/archives/000060.html for relevant snippets. See in particular the quote about Dave Cutler’s feelings about UNIX (admittedly second-hand, but probably fairly accurate given what we know about Dave Cutler). It rings fairly true.

How you react to the Dave Cutler quote will show your biases — UNIX folks will say, “Is this guy nuts? These PhD’s all are smarter than Dave Cutler. UNIX has got best-of-breed in every area.” Windows folks will say, “Exactly. I don’t want 37 different design philosophies on 21 programs. Windows is superior in overall consistency, even if it can be easily beat in every single area one-by-one.”

I am working on a 32-processor computer right now. The HP Integrity Superdome series of computers has 32 Itanium processors with 32 or 64 cores. I can assure you returning from hibernation is the least of your worries. Right now we are working through a driver issue that is compromising the 25TB of EMC Tier 1 storage that has been allocated to the server.

@Ryan: Linus Torvalds said that “hybrid” kernels are marketing bullcrap. He would be a hypocrite if Linux used a hybrid kernel, let alone a microkernel. Linux uses a monolithic kernel.