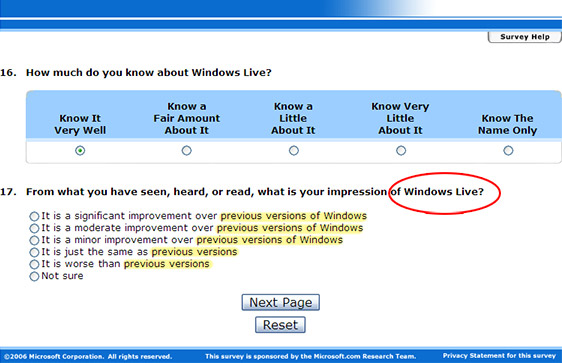

A Microsoft Research-sponsored survey for the Microsoft website, Microsoft.com, assumes that Windows Live is the next version of Windows. Did anyone with any actual knowledge of Windows Live or Windows look over the content of this survey?

I hear Windows Live is a minor improvement over Windows XP. Only a teeny weeny wittle bit better. Not much. Just a wittle bit. There are three positive choices and only one negative. Can everyone say “bias”?

ADDITION:

A reader by the name of Robert Banghart, who shares my thoughts on the quality of Microsoft surveys, expressed his views to me in an email. I thought his views were extremely relevant to the issues I discovered today, so I’ll share them here. This will also serve as an open letter to the people who manage this survey and every other Microsoft survey.

Robert says, “Unfortunately, I have found major deficiencies in just about every one of the surveys I have taken. They often don’t ask open questions, limit the available choices, and don’t provide an ‘other’ choice.”

A survey shouldn’t feel like an assessment marking sheet at school. I shouldn’t feel like I’m trying to get someone a raise for doing such a great job. For example, if I rank the search feature as extremely poor, there is no prompt asking me why, or how it could be improved. It’s almost as if they just want to know what number I give them and couldn’t care less about what would make that feature better.

Robert says, “Even when they do and when they provide comment text boxes, they invariably put some arbitrary and undisclosed limit on the number of text characters that can be put in the comment box.”

Robert says, “I’ve resigned myself to thinking that my efforts are simply scraped off by the (third party) survey-taker before the other answers are passed on to Microsoft.”

Guess which answers are more likely to be scraped off? And how exactly is any of this related to the Microsoft.com website?

I guess that’s the problem when companies deal with third parties that act on their behalf. The third parties don’t really care about gathering feedback to improve a product or service; they just want to collect responses.

Update: Surprisingly, the survey has been silently updated with the correct question: “From what you have seen, heard, or read, what is your impression of Windows Vista?”

Update update: Actually, the problem hasn’t been fixed. It stems from the survey asking randomized questions, rotating through alternatives that include “Windows Mesa, Windows Live and Windows Vista”. However, the following page still does not reflect the fact that Windows Live is not an operating system.

If I get you right, you’re saying that they shouldn’t be asking this question because they should know that Vista will be teh awesomezx0r?

No. They asked about Windows Live as if it were an operating system.

Ha, Long, I like your last comment in the box!

Microsoft does a lot of surveys of partners and end-users. Personally, I will probably fill out a dozen or more such surveys this month in either digital or paper form. Since this is a typical month, I’ll probably fill out close to 150 Microsoft surveys this year – especially with Vista and Office 2007 launches coming up.

Many of the surveys disclose that they are conducted on behalf of Microsoft by third-party providers. As Long’s post already notes, the survey writers often seem not to know anything about the product, platform, etc. they are surveying about. The surveys also appear to be written by people who know nothing about how to write surveys. If those people don’t know how to write valid survey questions, I think it is fair to assume they have no concept of survey analytics either. As a result, they waste survey-takers’ time, bilk Microsoft out of money, and produce garbage-out analytics from a garbage-in setup.

Microsoft isn’t the only purveyor of shoddy surveys. For example, see the post at http://www.juiceanalytics.com/weblog/?p=252 (full disclosure: I have no relationship to the post or juice analytics other than I subscribe to the blog).

On the other hand, just because others are guilty doesn’t mean Microsoft gets a free ride. Relying on bogus survey returns hurts its ability to meet partner and end-user needs. So, as I take the time to fill in my 150 or so surveys, I know that the odds of Microsoft, and therefore me, benefiting from them are slim at best.

My guess is that once someone within Microsoft whose province includes control of these surveys, or a subset of them, becomes vividly aware of the wasted effort, they will take the steps necessary to improve them. Hey, maybe they could even bring some of the new Microsoft BI software to bear on the issues.

Finding the people responsible within Microsoft and making them aware has been a problem up to this point. I have tried, unsuccessfully, through face-to-face comments and emails, including at the VP level.

When Scoble was around, I was able to enlist his aid in addressing such issues. Once he found the right person within Microsoft and that person was made aware, it was always my experience that they jumped on any issue with merit and made things happen.

I am glad that Long Zheng has undertaken to find the right people within Microsoft on this issue. After all, improved surveys will benefit everyone, and that is all I want.

It’s obvious that they are simply reusing the same form for all their services/products. While some questions may seem stupid in certain contexts, it’s a way of saving developer money by NOT writing a new survey for each product. Usability and attention to detail have NEVER been strong points at Microshaft.