Hallucination is generally a very bad thing, especially when small mystical creatures are involved. But Microsoft Research has published a paper today that makes hallucination a lot more interesting, and a lot more useful. It’s a set of algorithms and tools (plus a little magic) that, when combined, allow users to ‘hallucinate’ high dynamic range detail in low dynamic range photographs. What they can do will blow away every HDR photographer, and HDR is fast becoming the fad of the decade.

The researchers, Lvdi Wang, Li-Yi Wei, Kun Zhou, Baining Guo and Heung-Yeung Shum, describe it in their “High Dynamic Range Image Hallucination” paper:

We introduce high dynamic range image hallucination for adding high dynamic range details to the over-exposed and under-exposed regions of a low dynamic range image. Our method is based on a simple assumption: there exist high quality patches in the image with similar textures as the regions that are over or under exposed. Hence, we can add high dynamic range details to a region by simply transferring texture details from another patch that may be under different illumination levels.
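To make the idea in that abstract a little more concrete, here is a toy sketch in Python with NumPy. It is not the researchers' algorithm, just a naive illustration of "transferring texture details" under some loud assumptions: the image is a single luminance channel in [0, 1], over-exposed pixels are found with a simple threshold (`clip_level`), and "texture detail" is whatever is left after subtracting a box blur in the log domain. The function names `box_blur` and `hallucinate_detail` and all of their parameters are made up for this example.

```python
import numpy as np


def box_blur(img, radius):
    """Blur a 2-D array with a (2*radius+1)-wide box filter, padding with edge values."""
    k = 2 * radius + 1
    h, w = img.shape
    padded = np.pad(img, radius, mode="edge")
    out = np.zeros((h, w), dtype=np.float64)
    # Sum every shifted copy of the image that falls inside the box window.
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + h, dx:dx + w]
    return out / (k * k)


def hallucinate_detail(image, source_patch, clip_level=0.98, radius=2):
    """Toy texture transfer (an illustration, NOT the method from the paper).

    image:        2-D luminance array in [0, 1]; values near 1 are blown out.
    source_patch: 2-D array holding a well-exposed texture sample.
    Returns (result, mask); result may exceed 1.0 inside the masked region.
    """
    image = np.asarray(image, dtype=np.float64)
    patch = np.asarray(source_patch, dtype=np.float64)
    h, w = image.shape
    ph, pw = patch.shape

    # Assumption: naive tiling of the sample is good enough to cover the image.
    reps = (-(-h // ph), -(-w // pw))  # ceiling division
    tiled = np.tile(patch, reps)[:h, :w]

    # Work in the log domain so illumination and texture separate additively.
    log_tex = np.log(np.maximum(tiled, 1e-6))
    detail = log_tex - box_blur(log_tex, radius)  # high-frequency part only

    # Assumption: "over-exposed" simply means at or above clip_level.
    mask = image >= clip_level

    result = image.copy()
    # Modulate the flat, clipped region by the texture detail.
    result[mask] = image[mask] * np.exp(detail[mask])
    return result, mask


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    texture = rng.uniform(0.2, 0.8, size=(8, 8))  # stand-in texture sample
    photo = np.full((32, 32), 0.5)
    photo[8:24, 8:24] = 1.0                       # a blown-out square
    hdr, blown = hallucinate_detail(photo, texture)
    print("pixels filled:", int(blown.sum()))
    print("brightest value after transfer:", float(hdr.max()))
```

The blown-out square comes back with variation in it, and some of its values go above 1.0, which is the "high dynamic range" part. The real system presumably does far more than this (the brush strokes in the demo suggest the user picks where the texture comes from), so treat the sketch as a way to picture the assumption in the quote, nothing more.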

What they can do is simply amazing. Check out this demo video! I promise you won’t be disappointed.

If you don’t want to watch the video, then have a look at these screen captures (which are featured in the video).

Texture sample (left). Original image (middle). Original image with ‘Hallucination’ processing (right).

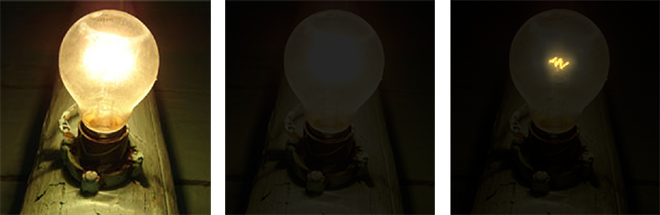

Original image (left). Original image under-exposed (middle). Original image under-exposed with ‘Hallucination’ processing (right).

Original image (left). Original image under-exposed (middle). Original image under-exposed with ‘Hallucination’ processing (right).

Original image (left). Original image under-exposed (middle). Original image under-exposed with ‘Hallucination’ processing (right).

It’s so simple that anyone could add HDR detail to LDR photographs. Just a couple of brush strokes can recreate an effect that normally takes the time and effort of shooting bracketed photographs and then post-processing them in Photoshop or another HDR editing tool. I can’t wait to see commercial applications take up this research and embed it in image editing tools.

Oh, and did I tell you they can do this for video? 😉

Very interesting 🙂

Microsoft is bringing design to a “friendly” level…

I don’t know if that’s good for designers, but this is a great tool.

ahem… you could always under-expose the original image by 2 stops and then push it by 2 stops in post with the blown-out regions masked.

This is probably a nice toy for amateurs but I doubt a lot of professional photographers will buy into this.

It seems to me that Microsoft most of the time does their own thing and then tries to push the results onto professionals. Perhaps they should take a lesson from Adobe and listen to how professionals actually work.

@tom: If you’re talking about actually taking 2 shots, then that’s a lot of work and you might not always have the opportunity to do so.

If you’re talking about trying to ‘fake’ an HDR by manually under-exposing a photograph in post-processing, then you’re always going to have some bits without detail. This is kind of like that, but it goes one step further by trying to fill in the bits without detail.

I think it’s a toy for both amateurs and professionals; one doesn’t have to exclude the other.

Looks excellent!

Multi-shot HDR falls flat with moving subjects, so I presume this method will provide some hope there. In the end, though, a better sensor equal to the eye is the real answer, and these tools will help even more then.

That is very nice indeed. Maybe this technology would go into the Expression-brand from Microsoft?

This looks ideal for people like me, who regularly take snapshots that have exposure issues. I doubt there will be a big uptake by professionals, but home users will certainly benefit.

Speaking of professionals, check out Sean McHugh’s Cambridge in Colour website which demonstrates HDRI and other techniques very well indeed.

Must agree, VERY impressive indeed. I have tried the underexposure mask approach, but it often creates noise. Obviously the best HDR images will always be produced with bracketed exposure shots. This has the advantage, though, of being able to ‘fix’ even older images that were not taken with HDR in mind. Also, bracketed exposures get tough with animate objects. Like it a lot.

Long, I think tom was talking about taking one under-exposed photo and then adding brightness etc.

This is awesome!! Thanks for sharing!

There’s just one thing we don’t know about this yet. What kind of processing horsepower is needed, and how long does it take on that baseline machine?

Hey folks:

I am the main guy responsible for this technology.

The system is pretty lightweight in my opinion.

The video shows some live-captured user interaction with our system. As shown, our system responds to user interaction in real time. The machine we used is a Dell OptiPlex 745 desktop with a Core 2 Duo at 2.13 GHz, 2 GB of memory and the Intel Q965 Express chipset. Right now all computation is done on the CPU and no GPU is involved (yet).

Impressive. Are they also working on putting together two LDR images of different exposures into one HDR image?

There was a nice PDF paper on reddit a while ago about displaying HDR images on an LDR display – when an area was overexposed (e.g. a window from an inside view of a church), it took that part from an LDR photo with low exposure. When it was underexposed, it took that part from an LDR photo with high exposure. ==> Beauty.

Has this project died?